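Resource overview

AdaBoost demo in MATLAB. The base learners include decision trees, neural networks, linear regression, an online Bayes classifier, and others. A dynamic GUI displays the learning process, the voting process, and more.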

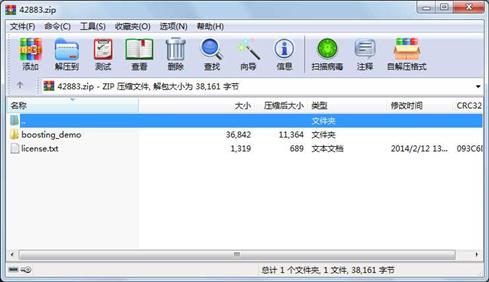

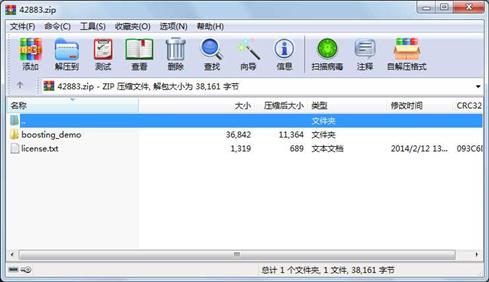
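To make the voting step mentioned in the overview concrete, here is a minimal MATLAB sketch of a weighted vote over base-learner predictions. The matrix `votes` and the weights `alpha` are made-up toy values, not taken from the demo. The built-in `sign` stands in for the package's own `ssign` helper, whose exact behaviour is not shown on this page.

```matlab
% Hypothetical toy data (assumption, not from the demo):
% each column holds one base learner's +1/-1 predictions for four samples.
votes = [ 1  1 -1;
          1 -1 -1;
         -1  1  1;
         -1 -1  1];

% Hypothetical per-learner weights (assumption).
alpha = [0.8; 0.3; 0.4];

% Weighted sum of the votes for each sample (the "margin").
margins = votes * alpha;

% Final ensemble decision: the sign of the weighted vote.
% sign() is used here in place of the package's ssign helper.
labels = sign(margins);

disp([margins labels]);
```

In this toy case the first learner carries the largest weight, so it outvotes the other two whenever they disagree with it only weakly.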

Code snippet and file information

    classdef Adaboost < handle
        %Adaboost
        properties
            k_max;
            k_next;
            k_cur;
            part_trains;
            base_learner;
            learners;
            learner_weights;
            early_terminate;
            d_weights;
            d_size;
        end

        methods
            function a = Adaboost(k_max, base_learner, early_terminate)
                %k_max: Size of the model, integer >= 1
                %base_learner: Model constructor function, e.g. @()CART()
                %The model must be an object with methods a.train(inputs, outputs)
                %and outputs = a.test(inputs).
                %early_terminate: Optional, determines whether to terminate if base
                %learner error is greater than 0.5 (default is to continue, and the
                %base learner receives a negative weight).
                if (nargin < 3)
                    early_terminate = false;
                end
                a.k_max = k_max;
                a.part_trains = a.k_max;
                a.k_next = a.k_max;
                a.learners = cell(a.k_max, 1);
                a.learner_weights = zeros(a.k_max, 1);
                a.base_learner = base_learner;
                a.early_terminate = early_terminate;
                a.k_cur = 0;
            end

            function part_train(a, inputs, outputs, k_next)
                a.k_next = k_next;
                a.train(inputs, outputs);
                a.k_next = a.k_max;
            end

            function train(a, inputs, outputs)
                if (a.k_cur == 0)
                    a.d_size = size(inputs, 1);
                    a.d_weights = ones(a.d_size, 1) ./ a.d_size;
                end
                while (a.k_cur < a.k_next)
                    a.k_cur = a.k_cur + 1;
                    %Create an NxN square of cumulatively summed weights (i.e. N
                    %rows containing the weight cumsum). Create an NxN square of random
                    %numbers (N columns containing the same N random numbers). Compare
                    %them and sum the rows. The number of cumulative weights that do
                    %not exceed each random number, plus one, is the index sampled by
                    %that row.
                    indices = sum(repmat(cumsum(a.d_weights)', a.d_size, 1) <= ...
                        repmat(rand(a.d_size, 1), 1, a.d_size), 2) + 1;
                    a.learners{a.k_cur} = a.base_learner();
                    a.learners{a.k_cur}.train(inputs(indices, :), outputs(indices));
                    predictions = a.learners{a.k_cur}.test(inputs) == outputs;
                    weighted_error = sum(~predictions .* a.d_weights);
                    if (a.early_terminate && weighted_error > 0.5)
                        a.k_max = a.k_cur - 1;
                        a.k_cur = a.k_cur - 1;
                        a.learner_weights = a.learner_weights(1:a.k_max);
                        break;
                    end
                    weighted_error = min(max(weighted_error, 0.01), 0.99);
                    a.learner_weights(a.k_cur) = 0.5 * log((1 - weighted_error) / weighted_error);
                    a.d_weights = feval(@(x)x./sum(x), a.d_weights .* ...
                        exp(-a.learner_weights(a.k_cur) * ssign(predictions)));
                end
            end

            function outputs = test(a, inputs)
                outputs = ssign(a.margins(inputs));
            end

            function margins = margins(a, inputs)

                margins = cell2mat(arrayfun(@(x)a.learners{x}.test(i

| Attribute | Size | Date | Time | Name |
| --- | --- | --- | --- | --- |
| File | 323 | 2010-11-02 | 15:09 | boosting_demo\ssign.m |
| File | 1189 | 2010-11-02 | 15:10 | boosting_demo\NeuralNetwork.m |
| File | 2619 | 2010-11-02 | 15:06 | boosting_demo\OnlineNaiveBayes.m |
| File | 18577 | 2010-11-02 | 15:02 | boosting_demo\boosting_demo.m |
| File | 692 | 2010-11-02 | 15:10 | boosting_demo\SVM.m |
| File | 5002 | 2010-11-02 | 15:06 | boosting_demo\LinearRegression.m |
| File | 784 | 2010-11-02 | 15:03 | boosting_demo\CART.m |
| File | 3095 | 2010-11-02 | 15:05 | boosting_demo\DataGen.m |
| File | 3166 | 2010-11-02 | 15:02 | boosting_demo\Adaboost.m |
| File | 1395 | 2010-11-02 | 15:10 | boosting_demo\Stump.m |
| File | 1319 | 2014-02-12 | 13:18 | license.txt |
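The resampling comment in the `train` method of the snippet describes drawing sample indices in proportion to their weights by comparing cumulative weights with uniform random numbers. The sketch below illustrates that idea in isolation. The weights `w` and the hand-picked draws `r_fixed` are assumptions chosen so the result can be checked by hand; they do not come from the demo.

```matlab
% Hypothetical toy weights (assumption); they should sum to 1.
w = [0.1; 0.2; 0.3; 0.4];
n = numel(w);

% Cumulative weights as a row vector: approximately [0.1 0.3 0.6 1.0].
c = cumsum(w)';

% Random version, mirroring the expression in the snippet:
% row i compares every cumulative weight with the i-th random draw.
r = rand(n, 1);
indices = sum(repmat(c, n, 1) <= repmat(r, 1, n), 2) + 1;

% Deterministic version with hand-picked draws, easy to verify:
% 0.05 falls in the first interval, 0.25 in the second, and so on.
r_fixed = [0.05; 0.25; 0.55; 0.95];
idx_fixed = sum(repmat(c, n, 1) <= repmat(r_fixed, 1, n), 2) + 1;

disp(idx_fixed');
```

Because floating-point rounding can leave the last cumulative weight marginally below 1, a draw very close to 1 could in principle produce the index `n + 1`. Clamping with `min(indices, n)` is one possible guard if that matters in practice.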


